Researchers led by Professor Dian Tjondronegoro of Griffith University in South East Queensland, Australia, have created an artificial intelligence (AI) video surveillance system that detects social distancing breaches in airports and other public places without using anyone's personal data. The system runs on a local network of cameras and keeps image processing gated, so no private data needs to be stored.

Professor Tjondronegoro is the acting Head of the Department of Business Strategy and Innovation at Griffith University. He is also the Chief Investigator of an ARC Discovery project that set out to determine the "effectiveness of activity based work environment to promote workers' productivity and wellbeing." His expertise lies in digital innovation, artificial intelligence, and "intelligent systems for transforming the future of business, work, productivity, health and well being," so the surveillance system fits squarely within his research interests.

According to Tech Xplore, the system for detecting whether people are breaking social distancing rules was developed with data privacy in mind. The outlet also quoted Professor Tjondronegoro as saying, "These adjustments are added to the central decision-making model to improve accuracy."

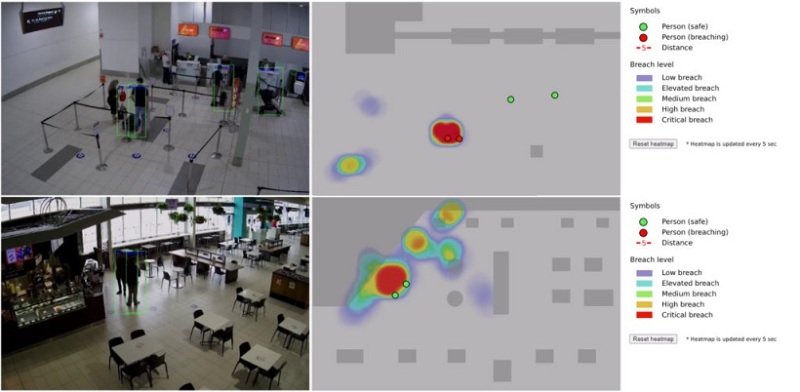

The case study, conducted at Gold Coast Airport, was published in Information, Technology & People. It examined passenger flow at the airport before the pandemic, when the airport handled 6.5 million passengers annually, with 17,000 on-site daily. The airport's hundreds of cameras cover 290,000 square meters of space; the researchers drew on nine of them, applying several cutting-edge algorithms in three related case studies that tested automatic people detection, automatic crowd counting, and social distance breach detection.

"Our goal was to create a system capable of real-time analysis with the ability to detect and automatically notify airport staff of social distancing breaches," Professor Tjondronegoro explained. Using the three cameras assigned to social distancing breach detection, the researchers found that camera angle affected the AI program's ability to detect and track people's movements. They recommended angling cameras between 45 and 60 degrees.

"The system can scale up in the future by adding new cameras and be adjusted for other purposes," Professor Tjondronegoro explained. "Our study shows responsible AI design can and should be useful for future developments of this application of technology."

Researchers stressed that data privacy was of utmost concern, which is why the AI program represents people as green or red dots rather than as actual images. A green dot turns red when the person it represents breaches social distancing rules.

Despite this, The Post Millennial took to Twitter to decry the technology as "dystopian," largely because it monitors people's physical proximity to one another regardless of whether they are with family or friends or simply beside strangers in a public place.

DYSTOPIAN: Australian university develops technology to track social distancing, portraying people as "dots on a screen" that turn red when there's a "breach" of the rules. pic.twitter.com/HpslvXDB21

The Post Millennial (@TPostMillennial), August 3, 2021

Surveillance technology of this kind may be new to Australia, but not to China, where the Chinese Communist Party (CCP) uses an expansive and pervasive system to "safeguard the regime," The Diplomat reported in April. The report said that Chinese authorities are now "testing artificial intelligence-powered analytics, which purport to predict unrest before it occurs."